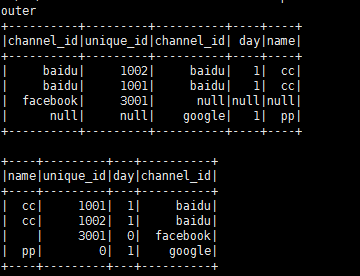

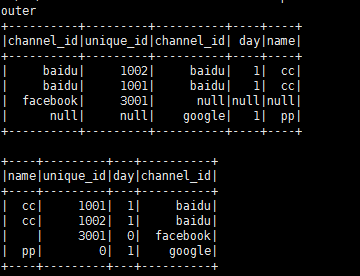

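Create two DataFrames, df1 and df2, specifying the column names and column types for each. Join them on the shared column "channel_id" with a full outer join. In the select, use a custom UDF (get_channel_id) to take whichever of the two tables' "channel_id" values is not null as the channel_id of the result set.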

Then use fillna to replace the null values left in the result set.
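To make the semantics concrete before running Spark, the same three steps (full outer join, first-non-null selection, null filling) can be modelled in plain Python. This is only an illustrative sketch, not Spark's implementation: the helper names `first_non_null`, `full_outer_join`, and `select_and_fill` are hypothetical, rows are modelled as dicts, and `None` stands in for SQL NULL. It assumes the join key is never null in the input, which holds for the sample data.

```python
# Plain-Python model of the pipeline; no Spark required.
# Rows are dicts and None plays the role of SQL NULL.

df1_rows = [
    {"channel_id": "baidu", "unique_id": 1001},
    {"channel_id": "baidu", "unique_id": 1002},
    {"channel_id": "facebook", "unique_id": 3001},
]
df2_rows = [
    {"channel_id": "baidu", "day": 1, "name": "cc"},
    {"channel_id": "google", "day": 1, "name": "pp"},
]


def first_non_null(a, b):
    """Same rule as get_channel_id: a if it is not None, otherwise b."""
    return a if a is not None else b


def full_outer_join(left, right, key):
    """Pair rows whose `key` matches; unmatched rows are paired with None."""
    pairs = []
    matched_right = set()
    for left_row in left:
        hits = [i for i, r in enumerate(right) if r[key] == left_row[key]]
        if hits:
            for i in hits:
                pairs.append((left_row, right[i]))
                matched_right.add(i)
        else:
            pairs.append((left_row, None))
    # Right-side rows that never matched still appear in the result.
    for i, right_row in enumerate(right):
        if i not in matched_right:
            pairs.append((None, right_row))
    return pairs


def select_and_fill(pairs, defaults):
    """Project the four output columns, then replace None with defaults."""
    result = []
    for left_row, right_row in pairs:
        left_row = left_row or {}
        right_row = right_row or {}
        row = {
            "name": right_row.get("name"),
            "unique_id": left_row.get("unique_id"),
            "day": right_row.get("day"),
            "channel_id": first_non_null(
                left_row.get("channel_id"), right_row.get("channel_id")
            ),
        }
        for col, default in defaults.items():
            if row[col] is None:
                row[col] = default
        result.append(row)
    return result


rows = select_and_fill(
    full_outer_join(df1_rows, df2_rows, "channel_id"),
    {"unique_id": 0, "day": 0, "name": ""},
)
for row in rows:
    print(row)
```

The model yields four rows: two for baidu (each df1 row pairs with the single df2 row), one for facebook with `day` and `name` filled in, and one for google with `unique_id` filled in. Spark does not guarantee the row order of a join, so only the set of rows is comparable. Spark's built-in `pyspark.sql.functions.coalesce` applies the same first-non-null rule as get_channel_id and can be used in place of the UDF.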

----------------------------------------------------------------------

from pyspark.sql import SparkSession
from pyspark.sql.functions import udf

# Reuse the active session (e.g. in the pyspark shell) or create one.
spark = SparkSession.builder.getOrCreate()

df1 = spark.createDataFrame([('baidu', 1001), ('baidu', 1002), ('facebook', 3001)],
                            "channel_id: string, unique_id: int")
df2 = spark.createDataFrame([('baidu', 1, 'cc'), ('google', 1, 'pp')],
                            "channel_id: string, day: int, name: string")

print('outer')
outer_df = df1.join(df2, df1.channel_id == df2.channel_id, 'outer')
outer_df.show()

# Return the first non-null channel_id; None if both sides are null.
@udf
def get_channel_id(a, b):
    if a is not None:
        return a
    if b is not None:
        return b
    return None

# The outer parentheses let the method chain span several lines.
(outer_df.select(df2.name, df1.unique_id, df2.day,
                 get_channel_id(df1.channel_id, df2.channel_id).alias('channel_id'))
 .fillna({"unique_id": 0, "day": 0, "name": ""})
 .show())

Printed output: