HBase Data Migration: A Hands-On Case Study

Author: 尹正杰 (Yin Zhengjie)

Copyright notice: this is an original work. Reproduction is not permitted, and violations will be pursued under the law.

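With HBase's Java API we can run MapReduce jobs that read from or write to HBase. For example, a MapReduce job can import data from the local file system into an HBase table, or read raw data out of HBase and analyze it.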

I. Official HBase-MapReduce examples

Recommended reading: http://hbase.apache.org/2.2/book.html#mapreduce

1>. Count the rows in the "yinzhengjie2020:teacher" table (both the Hadoop cluster and the HBase cluster must be running)

[root@hadoop101.yinzhengjie.org.cn ~]# yarn jar /yinzhengjie/softwares/hbase/lib/hbase-server-2.2.4.jar org.apache.hadoop.hbase.mapreduce.RowCounter yinzhengjie2020:teacher

2>. Use MapReduce to import local data into HBase

    [root@hadoop101.yinzhengjie.org.cn ~]# vim fruit.tsv
    [root@hadoop101.yinzhengjie.org.cn ~]#
    [root@hadoop101.yinzhengjie.org.cn ~]# cat fruit.tsv
    1001 Apple Red
    1002 Pear Yellow
    1003 Pineapple Yellow
    [root@hadoop101.yinzhengjie.org.cn ~]#

    [root@hadoop101.yinzhengjie.org.cn ~]# hdfs dfs -mkdir /input_fruit/
    [root@hadoop101.yinzhengjie.org.cn ~]#
    [root@hadoop101.yinzhengjie.org.cn ~]# ll
    total 4
    -rw-r--r-- 1 root root 54 May 29 18:50 fruit.tsv
    [root@hadoop101.yinzhengjie.org.cn ~]#
    [root@hadoop101.yinzhengjie.org.cn ~]#
    [root@hadoop101.yinzhengjie.org.cn ~]# hdfs dfs -put fruit.tsv /input_fruit/
    [root@hadoop101.yinzhengjie.org.cn ~]#
    [root@hadoop101.yinzhengjie.org.cn ~]# hdfs dfs -ls /input_fruit/
    Found 1 items
    -rw-r--r-- 3 root supergroup 54 2020-05-29 18:55 /input_fruit/fruit.tsv
    [root@hadoop101.yinzhengjie.org.cn ~]#
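The sample file can also be produced without an interactive editor. The following is a minimal sketch, assuming the three rows are separated by single tab characters (which matches the 54-byte size reported by `ll`); the helper name `write_fruit_tsv` and the `TSV_FILE` variable are made up for illustration, and the upload step only runs if an `hdfs` command is on the PATH.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical helper: writes the three sample rows as tab-separated lines.
write_fruit_tsv() {
  printf '1001\tApple\tRed\n1002\tPear\tYellow\n1003\tPineapple\tYellow\n' > "$1"
}

# Assumed default file name; override with TSV_FILE=/some/path if needed.
tsv_file="${TSV_FILE:-fruit.tsv}"
write_fruit_tsv "$tsv_file"

# Print the size in bytes so it can be compared with the listing above.
wc -c < "$tsv_file"

# Upload only when an HDFS client is available on this machine.
if command -v hdfs >/dev/null 2>&1; then
  hdfs dfs -mkdir -p /input_fruit/
  hdfs dfs -put -f "$tsv_file" /input_fruit/
fi
```

Using `-mkdir -p` and `-put -f` makes the script safe to run a second time, which the interactive session above does not need to worry about.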

[root@hadoop101.yinzhengjie.org.cn ~]# yarn jar /yinzhengjie/softwares/hbase/lib/hbase-server-2.2.4.jar org.apache.hadoop.hbase.mapreduce.ImportTsv -Dimporttsv.columns=HBASE_ROW_KEY,info:name,info:color fruit hdfs://hadoop106.yinzhengjie.org.cn:9000/input_fruit
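To make the `-Dimporttsv.columns` mapping more concrete, here is a small dry-run sketch. It is not ImportTsv itself, only an `awk` illustration of how each tab-separated field would line up with the column specification: the field in the `HBASE_ROW_KEY` position becomes the row key and the remaining fields become cells. The function name `preview_cells` is hypothetical.

```shell
#!/usr/bin/env bash
set -eu

# Same column specification as in the ImportTsv command above.
columns="HBASE_ROW_KEY,info:name,info:color"

# Hypothetical helper: reads TSV rows on stdin and prints one
# "rowkey<TAB>family:qualifier<TAB>value" line per cell.
preview_cells() {
  awk -F'\t' -v spec="$1" '
    BEGIN { n = split(spec, col, ",") }
    {
      # First pass: find which field holds the row key.
      for (i = 1; i <= n; i++) if (col[i] == "HBASE_ROW_KEY") key = $i
      # Second pass: every other field becomes one cell.
      for (i = 1; i <= n; i++)
        if (col[i] != "HBASE_ROW_KEY")
          printf "%s\t%s\t%s\n", key, col[i], $i
    }'
}

printf '1001\tApple\tRed\n1002\tPear\tYellow\n' | preview_cells "$columns"
```

When ImportTsv writes directly to a table, as in the command above, the target table (`fruit`, with an `info` column family) is expected to exist beforehand.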

II. Custom HBase-MapReduce example

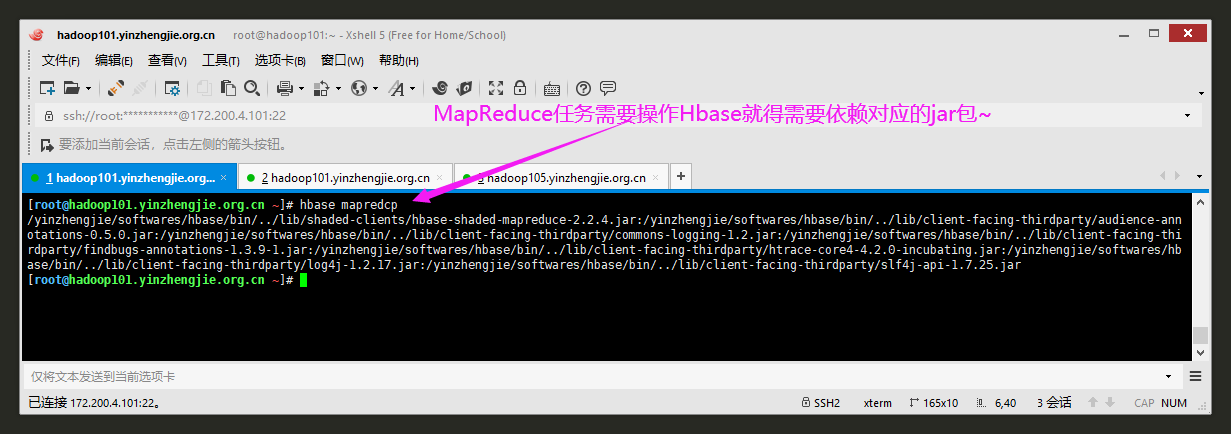

1>. View the HBase dependency jars (in other words, the classpath) that Hadoop MapReduce jobs need

    [root@hadoop101.yinzhengjie.org.cn ~]# hbase mapredcp
    /yinzhengjie/softwares/hbase/bin/../lib/shaded-clients/hbase-shaded-mapreduce-2.2.4.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/audience-annotations-0.5.0.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/commons-logging-1.2.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/findbugs-annotations-1.3.9-1.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/htrace-core4-4.2.0-incubating.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/log4j-1.2.17.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/slf4j-api-1.7.25.jar
    [root@hadoop101.yinzhengjie.org.cn ~]#
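The colon-separated output is hard to read on one line. A minimal sketch for listing one jar per line follows; `split_classpath` is a hypothetical helper, the sample string is shortened from the output above, and the live call is only made if `hbase` is on the PATH.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical helper: prints each classpath entry on its own line.
split_classpath() {
  printf '%s\n' "$1" | tr ':' '\n'
}

# Two entries copied from the output above, used as a stand-in.
sample='/yinzhengjie/softwares/hbase/bin/../lib/shaded-clients/hbase-shaded-mapreduce-2.2.4.jar:/yinzhengjie/softwares/hbase/bin/../lib/client-facing-thirdparty/slf4j-api-1.7.25.jar'
split_classpath "$sample"

# With HBase installed, the real classpath can be listed the same way.
if command -v hbase >/dev/null 2>&1; then
  split_classpath "$(hbase mapredcp)"
fi
```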

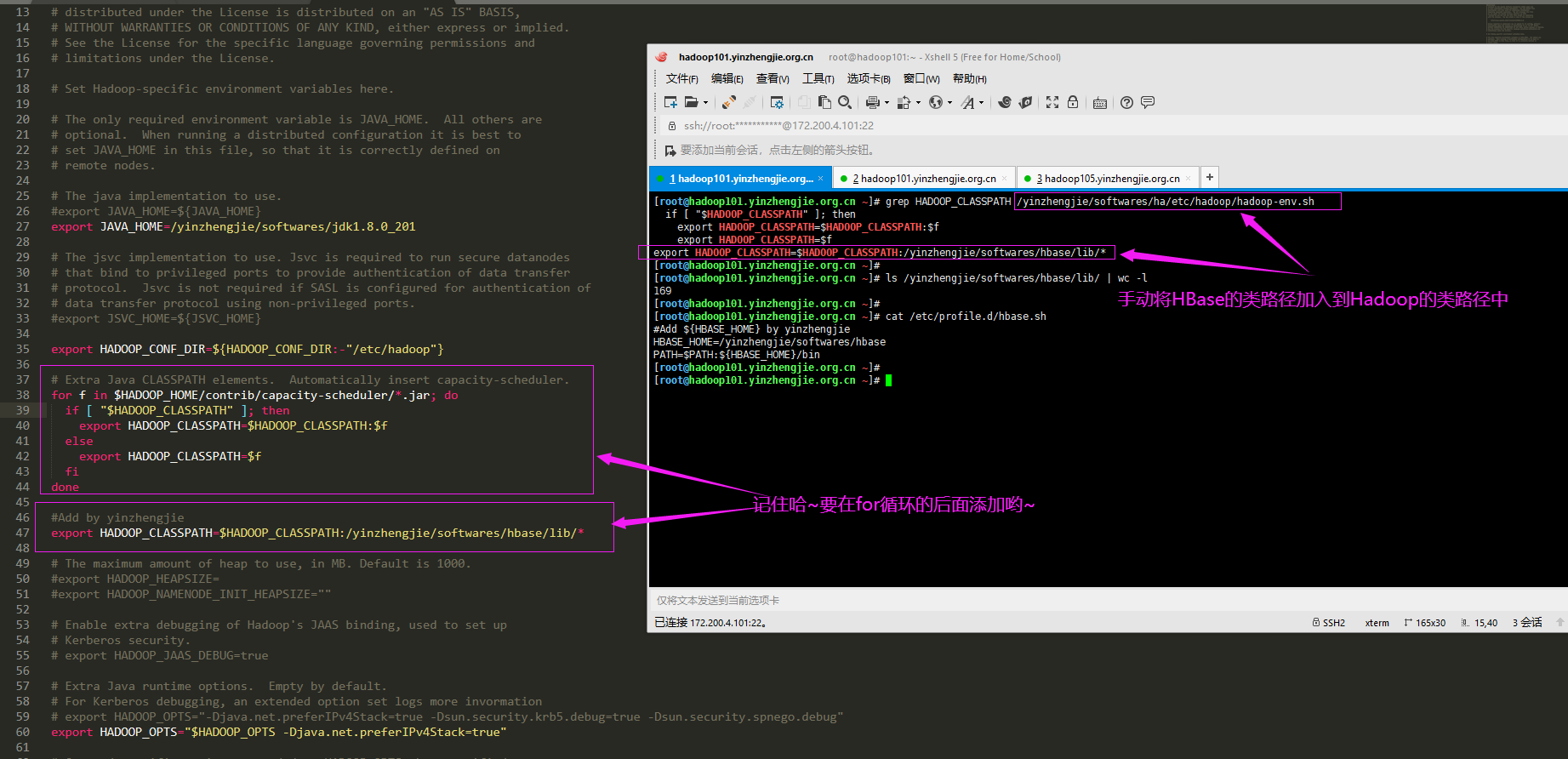

2>. Configure environment variables

    [root@hadoop101.yinzhengjie.org.cn ~]# cat /etc/profile.d/hbase.sh
    #Add ${HBASE_HOME} by yinzhengjie
    HBASE_HOME=/yinzhengjie/softwares/hbase
    PATH=$PATH:${HBASE_HOME}/bin
    [root@hadoop101.yinzhengjie.org.cn ~]#

    [root@hadoop101.yinzhengjie.org.cn ~]# cat /yinzhengjie/softwares/ha/etc/hadoop/hadoop-env.sh
    #!/bin/bash
    # Licensed to the Apache Software Foundation (ASF) under one
    # or more contributor license agreements. See the NOTICE file
    # distributed with this work for additional information
    # regarding copyright ownership. The ASF licenses this file
    # to you under the Apache License, Version 2.0 (the
    # "License"); you may not use this file except in compliance
    # with the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # Set Hadoop-specific environment variables here.
    # The only required environment variable is JAVA_HOME. All others are
    # optional. When running a distributed configuration it is best to
    # set JAVA_HOME in this file, so that it is correctly defined on
    # remote nodes.
    # The java implementation to use.
    #export JAVA_HOME=${JAVA_HOME}
    export JAVA_HOME=/yinzhengjie/softwares/jdk1.8.0_201
    # The jsvc implementation to use. Jsvc is required to run secure datanodes
    # that bind to privileged ports to provide authentication of data transfer
    # protocol. Jsvc is not required if SASL is configured for authentication of
    # data transfer protocol using non-privileged ports.
    #export JSVC_HOME=${JSVC_HOME}
    export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-"/etc/hadoop"}
    # Extra Java CLASSPATH elements. Automatically insert capacity-scheduler.
    for f in $HADOOP_HOME/contrib/capacity-scheduler/*.jar; do
      if [ "$HADOOP_CLASSPATH" ]; then
        export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:$f
      else
        export HADOOP_CLASSPATH=$f
      fi
    done
    #Add by yinzhengjie
    export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:/yinzhengjie/softwares/hbase/lib/*
    # The maximum amount of heap to use, in MB. Default is 1000.
    #export HADOOP_HEAPSIZE=
    #export HADOOP_NAMENODE_INIT_HEAPSIZE=""
    # Enable extra debugging of Hadoop's JAAS binding, used to set up
    # Kerberos security.
    # export HADOOP_JAAS_DEBUG=true
    # Extra Java runtime options. Empty by default.
    # For Kerberos debugging, an extended option set logs more invormation
    # export HADOOP_OPTS="-Djava.net.preferIPv4Stack=true -Dsun.security.krb5.debug=true -Dsun.security.spnego.debug"
    export HADOOP_OPTS="$HADOOP_OPTS -Djava.net.preferIPv4Stack=true"
    # Command specific options appended to HADOOP_OPTS when specified
    export HADOOP_NAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"
    export HADOOP_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"
    export HADOOP_SECONDARYNAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_SECONDARYNAMENODE_OPTS"
    export HADOOP_NFS3_OPTS="$HADOOP_NFS3_OPTS"
    export HADOOP_PORTMAP_OPTS="-Xmx512m $HADOOP_PORTMAP_OPTS"
    # The following applies to multiple commands (fs, dfs, fsck, distcp etc)
    export HADOOP_CLIENT_OPTS="$HADOOP_CLIENT_OPTS"
    # set heap args when HADOOP_HEAPSIZE is empty
    if [ "$HADOOP_HEAPSIZE" = "" ]; then
      export HADOOP_CLIENT_OPTS="-Xmx512m $HADOOP_CLIENT_OPTS"
    fi
    #HADOOP_JAVA_PLATFORM_OPTS="-XX:-UsePerfData $HADOOP_JAVA_PLATFORM_OPTS"
    # On secure datanodes, user to run the datanode as after dropping privileges.
    # This **MUST** be uncommented to enable secure HDFS if using privileged ports
    # to provide authentication of data transfer protocol. This **MUST NOT** be
    # defined if SASL is configured for authentication of data transfer protocol
    # using non-privileged ports.
    export HADOOP_SECURE_DN_USER=${HADOOP_SECURE_DN_USER}
    # Where log files are stored. $HADOOP_HOME/logs by default.
    #export HADOOP_LOG_DIR=${HADOOP_LOG_DIR}/$USER
    # Where log files are stored in the secure data environment.
    #export HADOOP_SECURE_DN_LOG_DIR=${HADOOP_LOG_DIR}/${HADOOP_HDFS_USER}
    ###
    # HDFS Mover specific parameters
    ###
    # Specify the JVM options to be used when starting the HDFS Mover.
    # These options will be appended to the options specified as HADOOP_OPTS
    # and therefore may override any similar flags set in HADOOP_OPTS
    #
    # export HADOOP_MOVER_OPTS=""
    ###
    # Router-based HDFS Federation specific parameters
    # Specify the JVM options to be used when starting the RBF Routers.
    # These options will be appended to the options specified as HADOOP_OPTS
    # and therefore may override any similar flags set in HADOOP_OPTS
    #
    # export HADOOP_DFSROUTER_OPTS=""
    ###
    ###
    # Advanced Users Only!
    ###
    # The directory where pid files are stored. /tmp by default.
    # NOTE: this should be set to a directory that can only be written to by
    # the user that will run the hadoop daemons. Otherwise there is the
    # potential for a symlink attack.
    #export HADOOP_PID_DIR=${HADOOP_PID_DIR}
    export HADOOP_PID_DIR=/yinzhengjie/softwares/hadoop-2.10.0/pid
    export HADOOP_SECURE_DN_PID_DIR=${HADOOP_PID_DIR}
    # A string representing this instance of hadoop. $USER by default.
    export HADOOP_IDENT_STRING=$USER
    [root@hadoop101.yinzhengjie.org.cn ~]#
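The file above appends the whole `hbase/lib/*` directory to `HADOOP_CLASSPATH`. One possible alternative, sketched here under the assumption that `hbase` is on the PATH, is to append only the jars reported by `hbase mapredcp`. The helper `append_classpath` is hypothetical; it also avoids producing a leading colon when `HADOOP_CLASSPATH` starts out empty.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical helper: prints $1 extended with $2, without a leading
# colon when $1 is empty.
append_classpath() {
  if [ -n "$1" ]; then
    printf '%s:%s\n' "$1" "$2"
  else
    printf '%s\n' "$2"
  fi
}

# Only touch HADOOP_CLASSPATH when the hbase command is available.
if command -v hbase >/dev/null 2>&1; then
  HADOOP_CLASSPATH="$(append_classpath "${HADOOP_CLASSPATH:-}" "$(hbase mapredcp)")"
  export HADOOP_CLASSPATH
  echo "HADOOP_CLASSPATH=$HADOOP_CLASSPATH"
fi
```

The narrower classpath reduces the chance of jar conflicts between Hadoop and HBase, at the cost of depending on the `hbase` launcher being installed on every node that sources the file.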

3>. Inspect the data in the source table

    hbase(main):001:0> list
    TABLE
    engineer
    teacher
    yinzhengjie2020:teacher
    3 row(s)
    Took 0.3388 seconds
    => ["engineer", "teacher", "yinzhengjie2020:teacher"]
    hbase(main):002:0>

    hbase(main):002:0> describe 'yinzhengjie2020:teacher'
    Table yinzhengjie2020:teacher is ENABLED
    yinzhengjie2020:teacher
    COLUMN FAMILIES DESCRIPTION
    {NAME => 'synopsis', VERSIONS => '1', EVICT_BLOCKS_ON_CLOSE => 'false', NEW_VERSION_BEHAVIOR => 'false', KEEP_DELETED_CELLS => 'FALSE', CACHE_DATA_ON_WRITE => 'false', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', MIN_VERSIONS => '0', REPLICATION_SCOPE => '0', BLOOMFILTER => 'ROW', CACHE_INDEX_ON_WRITE => 'false', IN_MEMORY => 'false', CACHE_BLOOMS_ON_WRITE => 'false', PREFETCH_BLOCKS_ON_OPEN => 'false', COMPRESSION => 'NONE', BLOCKCACHE => 'true', BLOCKSIZE => '65536'}
    1 row(s)
    QUOTAS
    0 row(s)
    Took 0.2182 seconds
    hbase(main):003:0>

    hbase(main):003:0> scan 'yinzhengjie2020:teacher'
    ROW COLUMN+CELL
     100001 column=synopsis:name, timestamp=1590715486602, value=JasonYin
     10010 column=synopsis:name, timestamp=1590726110051, value=\xE5\xB0\xB9\xE6\xAD\xA3\xE6\x9D\xB0
    2 row(s)
    Took 0.0478 seconds
    hbase(main):004:0>
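The value in row 10010 is not corrupted. The HBase shell prints non-ASCII bytes as hexadecimal escapes (conventionally written `\xE5\xB0\xB9\xE6\xAD\xA3\xE6\x9D\xB0`), and these nine bytes are the UTF-8 encoding of 尹正杰. The sketch below decodes such a string back to text; it assumes bash, whose `printf '%b'` understands `\xHH` escapes, and the function name `decode_hbase_value` is made up for illustration.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical helper: expands \xHH escapes, as printed by the HBase
# shell, back into raw bytes. Relies on bash's printf %b.
decode_hbase_value() {
  printf '%b\n' "$1"
}

# Plain ASCII values pass through unchanged.
decode_hbase_value 'JasonYin'

# The escaped value from row 10010 decodes to the UTF-8 text.
decode_hbase_value '\xE5\xB0\xB9\xE6\xAD\xA3\xE6\x9D\xB0'
```

Inside the HBase shell itself, a formatter such as `scan 'yinzhengjie2020:teacher', {COLUMNS => ['synopsis:name:toString']}` can usually render the same value as text directly.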

4>. Create the target table

    hbase(main):005:0> create 'yinzhengjie2020:student','synopsis'
    Created table yinzhengjie2020:student
    Took 1.3685 seconds
    => Hbase::Table - yinzhengjie2020:student
    hbase(main):006:0>

    hbase(main):006:0> list
    TABLE
    engineer
    teacher
    yinzhengjie2020:student
    yinzhengjie2020:teacher
    4 row(s)
    Took 0.0091 seconds
    => ["engineer", "teacher", "yinzhengjie2020:student", "yinzhengjie2020:teacher"]
    hbase(main):007:0>

    hbase(main):007:0> describe 'yinzhengjie2020:student'
    Table yinzhengjie2020:student is ENABLED
    yinzhengjie2020:student
    COLUMN FAMILIES DESCRIPTION
    {NAME => 'synopsis', VERSIONS => '1', EVICT_BLOCKS_ON_CLOSE => 'false', NEW_VERSION_BEHAVIOR => 'false', KEEP_DELETED_CELLS => 'FALSE', CACHE_DATA_ON_WRITE => 'false', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', MIN_VERSIONS => '0', REPLICATION_SCOPE => '0', BLOOMFILTER => 'ROW', CACHE_INDEX_ON_WRITE => 'false', IN_MEMORY => 'false', CACHE_BLOOMS_ON_WRITE => 'false', PREFETCH_BLOCKS_ON_OPEN => 'false', COMPRESSION => 'NONE', BLOCKCACHE => 'true', BLOCKSIZE => '65536'}
    1 row(s)
    QUOTAS
    0 row(s)
    Took 0.0651 seconds
    hbase(main):008:0>

    hbase(main):008:0> scan 'yinzhengjie2020:student'
    ROW COLUMN+CELL
    0 row(s)
    Took 0.0765 seconds
    hbase(main):009:0>
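For a scripted before-and-after check of the two tables, the "N row(s)" summary line can be pulled out of the shell output. This is only a sketch: `row_count` is a hypothetical helper, the sample text mirrors the scan output above, and the live comparison assumes `hbase shell -n` (non-interactive mode) is available on the machine.

```shell
#!/usr/bin/env bash
set -eu

# Hypothetical helper: reads HBase shell output on stdin and prints N
# from the last "N row(s)" summary line.
row_count() {
  sed -n 's/^\([0-9][0-9]*\) row(s).*$/\1/p' | tail -n 1
}

# Dry run against text shaped like the empty-table scan above.
printf 'ROW COLUMN+CELL\n0 row(s)\nTook 0.0765 seconds\n' | row_count

# Live comparison of source and target, only when hbase is installed.
if command -v hbase >/dev/null 2>&1; then
  for table in 'yinzhengjie2020:teacher' 'yinzhengjie2020:student'; do
    count="$(echo "scan '$table'" | hbase shell -n 2>/dev/null | row_count)"
    echo "$table: $count row(s)"
  done
fi
```

A full scan is fine for tables this small. For large tables, the RowCounter job from section I is likely the better way to obtain the counts.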

5>. Write a MapReduce program that migrates the data in the "yinzhengjie2020:teacher" table to the "yinzhengjie2020:student" table